This article shows you how to use Azure OpenAI multimodal models to generate responses to user messages and uploaded images in a chat app. This chat app sample also includes all the infrastructure and configuration needed to provision Azure OpenAI resources and deploy the app to Azure Container Apps using the Azure Developer CLI.

By following the instructions in this article, you will:

- Deploy a chat app to Azure Container Apps that uses managed identity for authentication.

- Upload images to be used as part of the chat stream.

- Chat with an Azure OpenAI multimodal Large Language Model (LLM) using the OpenAI library's Responses API.

Once you complete this article, you can start modifying the new project with your custom code.

Note

This article uses one or more AI app templates as the basis for the examples and guidance in the article. AI app templates provide you with well-maintained, easy to deploy reference implementations that help to ensure a high-quality starting point for your AI apps.

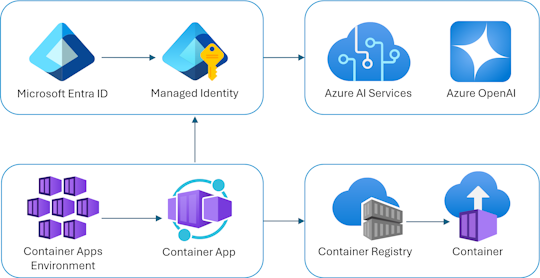

Architectural overview

A simple architecture of the chat app is shown in the following diagram:

The chat app is running as an Azure Container App. The app uses managed identity via Microsoft Entra ID to authenticate with Azure OpenAI in production, instead of an API key. During development, the app supports multiple authentication methods including Azure Developer CLI credentials and API keys.

The application architecture relies on the following services and components:

- Azure OpenAI represents the AI provider that we send the user's queries to.

- Azure Container Apps is the container environment where the application is hosted.

- Managed Identity helps us ensure best-in-class security and eliminates the requirement for you as a developer to securely manage a secret.

- Bicep files for provisioning Azure resources, including Azure OpenAI, Azure Container Apps, Azure Container Registry, Azure Log Analytics, and role-based access control (RBAC) roles.

- A Python Quart app that uses the openai package to generate responses to user messages with uploaded image files.

- A basic HTML/JavaScript frontend that streams responses from the backend using JSON Lines over a ReadableStream.

Cost

In an attempt to keep pricing as low as possible in this sample, most resources use a basic or consumption pricing tier. Alter your tier level as needed based on your intended usage. To stop incurring charges, delete the resources when you're done with the article.

Learn more about cost in the sample repo.

Prerequisites

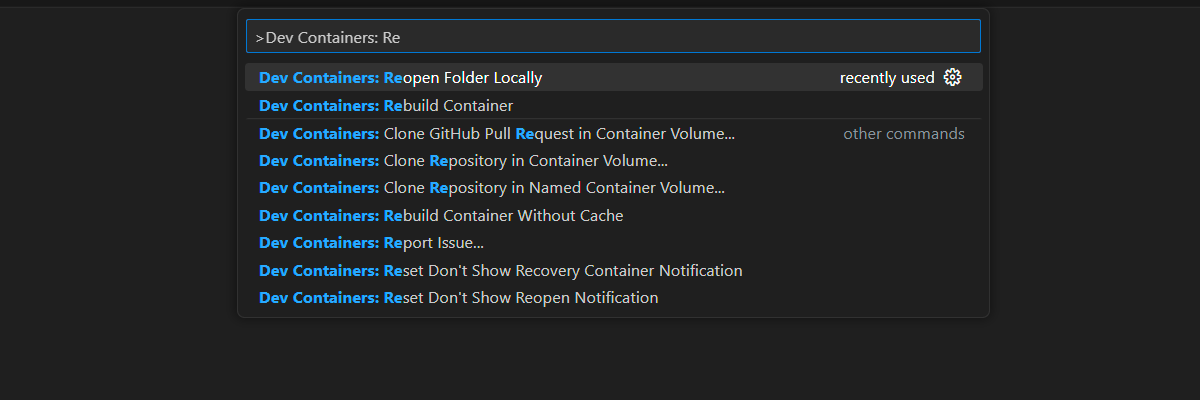

A development container environment is available with all dependencies required to complete this article. You can run the development container in GitHub Codespaces (in a browser) or locally using Visual Studio Code.

To use this article, you need to fulfill the following prerequisites:

- An Azure subscription - Create one for free

- Azure account permissions - Your Azure account must have Microsoft.Authorization/roleAssignments/write permissions, such as User Access Administrator or Owner.

- GitHub account

Open development environment

Use the following instructions to deploy a preconfigured development environment containing all required dependencies to complete this article.

GitHub Codespaces runs a development container managed by GitHub with Visual Studio Code for the Web as the user interface. For the most straightforward development environment, use GitHub Codespaces so that you have the correct developer tools and dependencies preinstalled to complete this article.

Important

All GitHub accounts can use Codespaces for up to 60 hours free each month with two core instances. For more information, see GitHub Codespaces monthly included storage and core hours.

Use the following steps to create a new GitHub Codespace on the main branch of the Azure-Samples/openai-chat-vision-quickstart GitHub repository.

Right-click on the following button, and select Open link in new window. This action allows you to have the development environment and the documentation available for review.

On the Create codespace page, review and then select Create new codespace.

Wait for the codespace to start. This startup process can take a few minutes.

Sign in to Azure with the Azure Developer CLI in the terminal at the bottom of the screen.

azd auth login

Copy the code from the terminal and then paste it into a browser. Follow the instructions to authenticate with your Azure account.

The remaining tasks in this article take place in the context of this development container.

Deploy and run

The sample repository contains all the code and configuration files for the chat app Azure deployment. The following steps walk you through the sample chat app Azure deployment process.

Deploy chat app to Azure

Important

To keep costs low, this sample uses basic or consumption pricing tiers for most resources. Adjust the tier as needed, and delete resources when you're done to avoid charges.

Run the following Azure Developer CLI command for Azure resource provisioning and source code deployment:

azd up

Use the following table to answer the prompts:

| Prompt | Answer |
|---|---|
| Environment name | Keep it short and lowercase. Add your name or alias. For example, chat-vision. It's used as part of the resource group name. |
| Subscription | Select the subscription to create the resources in. |
| Location (for hosting) | Select a location near you from the list. |
| Location for the Azure OpenAI model | Select a location near you from the list. If the same location is available as your first location, select that. |

Wait until the app is deployed. Deployment usually takes between 5 and 10 minutes to complete.

Use chat app to ask questions to the Large Language Model

The terminal displays a URL after successful application deployment.

Select the URL labeled Deploying service web to open the chat application in a browser.

In the browser, upload an image by clicking on Choose File and selecting an image.

Ask a question about the uploaded image such as "What is the image about?".

The answer comes from Azure OpenAI and the result is displayed.

Exploring the sample code

This sample uses an Azure OpenAI multimodal model to generate responses to user messages and uploaded images.

Base64 Encoding the uploaded image in the frontend

The uploaded image needs to be Base64 encoded so that it can be used directly as a Data URI as part of the message.

In the sample, the following frontend code snippet in the script tag of the src/quartapp/templates/index.html file handles that functionality. The toBase64 arrow function uses the readAsDataURL method of the FileReader to asynchronously read in the uploaded image file as a Base64-encoded string.

const toBase64 = file => new Promise((resolve, reject) => {
    const reader = new FileReader();
    reader.readAsDataURL(file);
    reader.onload = () => resolve(reader.result);
    reader.onerror = reject;
});

The toBase64 function is called by a listener on the form's submit event.
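To make the Data URI format concrete, here is a minimal Python sketch of the same encoding step. It isn't part of the sample: the to_data_uri function and the photo.png filename are hypothetical, and the MIME type is guessed from the file extension rather than detected by the browser.

```python
import base64
import mimetypes


def to_data_uri(data: bytes, filename: str) -> str:
    """Encode raw file bytes as a data URI, similar to FileReader.readAsDataURL."""
    # Guess the MIME type from the filename; fall back to a generic binary type.
    mime_type, _ = mimetypes.guess_type(filename)
    if mime_type is None:
        mime_type = "application/octet-stream"
    encoded = base64.b64encode(data).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


# Example input: the eight-byte signature that starts every PNG file.
png_signature = b"\x89PNG\r\n\x1a\n"
data_uri = to_data_uri(png_signature, "photo.png")
print(data_uri)
```

The result has the same data:image/png;base64, prefix that the frontend sends to the backend in the file field.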

The submit event listener handles the complete chat interaction flow. When the user submits a message, the following flow occurs:

- Gets the uploaded image file (if present) and encodes as Base64

- Creates and displays the user's message in the chat, including the uploaded image

- Prepares an assistant message container with a "Typing..." indicator

- Adds the user's message to the message history array in Responses API format

- Sends a fetch POST request to the /chat/stream endpoint with the message history and context (including the Base64 encoded image and filename)

- Processes the streamed JSON-lines response to display each text delta incrementally

- Handles any errors during streaming

- Adds a speech output button after receiving the complete response so users can hear the response

- Clears the input field and returns focus for the next message

form.addEventListener("submit", async function(e) {
    e.preventDefault();

    // Hide the no-messages-heading when a message is added
    document.getElementById("no-messages-heading").style.display = "none";

    const file = document.getElementById("file").files[0];
    const fileData = file ? await toBase64(file) : null;

    const message = messageInput.value;

    const userTemplateClone = userTemplate.content.cloneNode(true);
    userTemplateClone.querySelector(".message-content").innerText = message;
    if (file) {
        const img = document.createElement("img");
        img.src = fileData;
        userTemplateClone.querySelector(".message-file").appendChild(img);
    }
    targetContainer.appendChild(userTemplateClone);

    const assistantTemplateClone = assistantTemplate.content.cloneNode(true);
    let messageDiv = assistantTemplateClone.querySelector(".message-content");
    targetContainer.appendChild(assistantTemplateClone);

    messages.push({
        "role": "user",
        "content": [{"type": "input_text", "text": message}]
    });

    try {
        messageDiv.scrollIntoView();
        const response = await fetch("/chat/stream", {
            method: "POST",
            headers: {"Content-Type": "application/json"},
            body: JSON.stringify({
                messages: messages,
                context: {
                    file: fileData,
                    file_name: file ? file.name : null
                }
            })
        });
        if (!response.ok || !response.body) {
            throw new Error(`Request failed (${response.status})`);
        }

        let answer = "";
        for await (const chunk of readNDJSONStream(response.body)) {
            if (chunk.type === "error" || chunk.type === "response.failed") {
                messageDiv.innerHTML = "Error: " + (chunk.error || "Unknown error");
                break;
            }
            if (chunk.type === "response.output_text.delta") {
                // Clear out the DIV if it's the first answer chunk we've received
                if (answer == "") {
                    messageDiv.innerHTML = "";
                }
                answer += chunk.delta;
                messageDiv.innerHTML = converter.makeHtml(answer);
                messageDiv.scrollIntoView();
            }
        }
        messages.push({
            "role": "assistant",
            "content": [{"type": "output_text", "text": answer}]
        });

        messageInput.value = "";

        const speechOutput = document.createElement("speech-output-button");
        speechOutput.setAttribute("text", answer);
        messageDiv.appendChild(speechOutput);
        messageInput.focus();
    } catch (error) {
        messageDiv.innerHTML = "Error: " + error;
    }
});
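The handler relies on readNDJSONStream to turn the response body into parsed objects, but that helper's implementation isn't shown here. As an illustration of the general technique only, the following Python sketch parses JSON Lines from arbitrarily split byte chunks and accumulates the text deltas. The iter_ndjson and collect_answer names are hypothetical, and unlike the frontend, this sketch raises an exception on an error event instead of updating the page.

```python
import json
from typing import Iterable, Iterator


def iter_ndjson(chunks: Iterable[bytes]) -> Iterator[dict]:
    """Yield one parsed JSON object per complete line across arbitrary byte chunks."""
    buffer = b""
    for chunk in chunks:
        buffer += chunk
        # Only complete lines are parsed; a trailing partial line stays buffered.
        *lines, buffer = buffer.split(b"\n")
        for line in lines:
            if line.strip():
                yield json.loads(line)
    # Parse whatever remains if the stream did not end with a newline.
    if buffer.strip():
        yield json.loads(buffer)


def collect_answer(events: Iterable[dict]) -> str:
    """Concatenate text deltas and raise on the first error event."""
    answer = ""
    for event in events:
        if event.get("type") in ("error", "response.failed"):
            raise RuntimeError(event.get("error") or "Unknown error")
        if event.get("type") == "response.output_text.delta":
            answer += event["delta"]
    return answer


# Network chunks can split a line anywhere, even in the middle of a JSON object.
chunks = [
    b'{"type": "response.output_text.delta", "delta": "A ca',
    b't on"}\n{"type": "response.output_text.delta", ',
    b'"delta": " a sofa."}\n',
]
answer = collect_answer(iter_ndjson(chunks))
print(answer)
```

Buffering until a newline arrives is what allows each delta to be rendered as soon as its line is complete, without waiting for the whole response.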

Handling the image with the backend

In the src/quartapp/chat.py file, the backend code for image handling starts after configuring keyless authentication.

Note

For more information on how to use keyless connections for authentication and authorization to Azure OpenAI, check out the Get started with the Azure OpenAI security building block Microsoft Learn article.

Authentication configuration

The configure_openai() function sets up the OpenAI client before the app starts serving requests. It uses Quart's @bp.before_app_serving decorator to configure authentication based on environment variables. This flexible system lets developers work in different contexts without changing code.

Authentication modes explained

- Local development (OPENAI_HOST=local): Connects to a local OpenAI-compatible API service (like Ollama or LocalAI) without authentication. Use this mode for testing without internet or API costs.

- Azure OpenAI with API key (AZURE_OPENAI_KEY_FOR_CHATVISION environment variable): Uses an API key for authentication. Avoid this mode in production because API keys require manual rotation and pose security risks if exposed. Use it for local testing inside a Docker container where Azure Developer CLI credentials aren't available.

- Production with Managed Identity (RUNNING_IN_PRODUCTION=true): Uses ManagedIdentityCredential to authenticate with Azure OpenAI through the container app's managed identity. This method is recommended for production because it removes the need to manage secrets. The Bicep files assign the managed identity to the container app and grant it permissions during deployment.

- Development with Azure Developer CLI (default mode): Uses AzureDeveloperCliCredential to authenticate with Azure OpenAI using the credentials from azd auth login. This mode simplifies local development without managing API keys.

Key implementation details

- The get_bearer_token_provider() function returns a callable that obtains access tokens from the Azure credential, refreshing them as needed, so they can be used as bearer tokens.

- The Azure OpenAI endpoint path ends with /openai/v1/, the generally available OpenAI-compatible endpoint for Microsoft Foundry Models.

- The configure_openai() function is async, since Quart is an asynchronous web app framework. Quart lets request handlers be async, so while the app is awaiting slow LLM API responses, the server can keep handling other requests.
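To illustrate why async handlers matter, the following minimal sketch uses only the Python standard library rather than Quart. The fake_llm_call function and its 0.2-second delay are hypothetical stand-ins for a slow Azure OpenAI request. Five simulated handlers run on one event loop, and because each one yields control while it waits, the total time stays close to a single delay instead of five.

```python
import asyncio
import time


async def fake_llm_call(delay: float) -> str:
    # Stand-in for a slow network call; awaiting yields control to the event loop.
    await asyncio.sleep(delay)
    return "done"


async def handle_requests(count: int, delay: float) -> float:
    """Run several simulated handlers concurrently and return the elapsed seconds."""
    start = time.perf_counter()
    await asyncio.gather(*(fake_llm_call(delay) for _ in range(count)))
    return time.perf_counter() - start


elapsed = asyncio.run(handle_requests(count=5, delay=0.2))
print(f"5 overlapping calls took {elapsed:.2f}s")
```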

Here's the complete authentication setup code from chat.py:

@bp.before_app_serving
async def configure_openai():
    bp.model_name = os.getenv("OPENAI_MODEL", "gpt-4o")
    openai_host = os.getenv("OPENAI_HOST", "azure")

    if openai_host == "local":
        bp.openai_client = AsyncOpenAI(api_key="no-key-required", base_url=os.getenv("LOCAL_OPENAI_ENDPOINT"))
        current_app.logger.info("Using local OpenAI-compatible API service with no key")
    elif os.getenv("AZURE_OPENAI_KEY_FOR_CHATVISION"):
        bp.openai_client = AsyncOpenAI(
            base_url=os.environ["AZURE_OPENAI_ENDPOINT"].rstrip("/") + "/openai/v1",
            api_key=os.getenv("AZURE_OPENAI_KEY_FOR_CHATVISION"),
        )
        current_app.logger.info("Using Azure OpenAI with key")
    elif os.getenv("RUNNING_IN_PRODUCTION"):
        client_id = os.environ["AZURE_CLIENT_ID"]
        azure_credential = ManagedIdentityCredential(client_id=client_id)
        token_provider = get_bearer_token_provider(azure_credential, "https://cognitiveservices.azure.com/.default")
        bp.openai_client = AsyncOpenAI(
            base_url=os.environ["AZURE_OPENAI_ENDPOINT"].rstrip("/") + "/openai/v1",
            api_key=token_provider,
        )
        current_app.logger.info("Using Azure OpenAI with managed identity credential for client ID %s", client_id)
    else:
        tenant_id = os.environ["AZURE_TENANT_ID"]
        azure_credential = AzureDeveloperCliCredential(tenant_id=tenant_id)
        token_provider = get_bearer_token_provider(azure_credential, "https://cognitiveservices.azure.com/.default")
        bp.openai_client = AsyncOpenAI(
            base_url=os.environ["AZURE_OPENAI_ENDPOINT"].rstrip("/") + "/openai/v1",
            api_key=token_provider,
        )
        current_app.logger.info("Using Azure OpenAI with az CLI credential for tenant ID: %s", tenant_id)
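The branch order in configure_openai() decides which mode wins when several environment variables are set at once. The following sketch restates that order as a pure function that can be checked without any Azure resources. The select_auth_mode and build_base_url names, the mode labels, and the example endpoint are hypothetical and don't appear in the sample.

```python
from typing import Mapping


def select_auth_mode(env: Mapping[str, str]) -> str:
    """Return which branch of the if/elif chain would run for the given environment."""
    if env.get("OPENAI_HOST", "azure") == "local":
        return "local"
    if env.get("AZURE_OPENAI_KEY_FOR_CHATVISION"):
        return "api-key"
    if env.get("RUNNING_IN_PRODUCTION"):
        return "managed-identity"
    return "azure-developer-cli"


def build_base_url(endpoint: str) -> str:
    """Append the /openai/v1 path, tolerating a trailing slash on the endpoint."""
    return endpoint.rstrip("/") + "/openai/v1"


print(select_auth_mode({"RUNNING_IN_PRODUCTION": "false"}))
print(build_base_url("https://example.openai.azure.com/"))
```

Two details follow from this order. An API key takes precedence over RUNNING_IN_PRODUCTION, and because environment variables are strings, any non-empty value for RUNNING_IN_PRODUCTION (including false) selects the managed identity branch.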

Chat handler function

The chat_handler() function processes chat requests sent to the /chat/stream endpoint. It receives a POST request with a JSON payload.

The JSON payload includes:

- messages: A list of conversation history. Each message has a role ("user" or "assistant") and content (an array of content parts using the Responses API input format).

- context: Extra data for processing, including:

  - file: Base64-encoded image data (for example, data:image/png;base64,...).

  - file_name: The uploaded image's original filename (useful for logging or identifying the image type).

The handler extracts the message history and image data. If no image is uploaded, the image value is null (None in Python), and the handler sends the user's message without an image part.

@bp.post("/chat/stream")
async def chat_handler():
    request_json = await request.get_json()
    request_messages = request_json["messages"]
    # Get the base64 encoded image from the request context
    # This will be None if no image was uploaded
    image = request_json["context"]["file"]
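For reference, here is a sketch of what a request body with this shape could look like, along with a standalone extraction function. The example_body value and the extract_chat_request name are hypothetical. Note one deliberate difference from the sample: the handler indexes request_json["context"]["file"] directly, while this sketch uses .get() so that a missing context yields None instead of raising KeyError.

```python
import json
from typing import Any, Dict, List, Optional, Tuple

# Hypothetical request body in the shape described above.
example_body = """
{
  "messages": [
    {"role": "user", "content": [{"type": "input_text", "text": "What is the image about?"}]}
  ],
  "context": {"file": "data:image/png;base64,iVBORw0KGgo=", "file_name": "photo.png"}
}
"""


def extract_chat_request(payload: Dict[str, Any]) -> Tuple[List[Dict[str, Any]], Optional[str]]:
    """Return the message history and the optional Base64 image data URI."""
    messages = payload["messages"]
    # Tolerate a missing or null context, which the sample handler does not do.
    context = payload.get("context") or {}
    return messages, context.get("file")


messages, image = extract_chat_request(json.loads(example_body))
print(messages[-1]["content"][0]["text"])
print(image is not None)
```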

Building the input array for vision requests

The response_stream() function prepares the input array that is sent to the Azure OpenAI Responses API. The @stream_with_context decorator keeps the request context while streaming the response.

Input preparation logic

- Start with conversation history: The function begins with all_input, which includes all previous messages except the most recent one (request_messages[0:-1]). Messages are already in Responses API format from the frontend.

- Handle the current user message based on image presence:

  - With image: Append an input_image content part to the user's existing content array.

  - Without image: Append the user's message as-is.

    @stream_with_context
    async def response_stream():
        # This sends all messages, so API request may exceed token limits
        all_input = list(request_messages[0:-1])

        # Add the current user message, appending image if provided
        if image:
            user_content = request_messages[-1]["content"] + [{"type": "input_image", "image_url": image}]
            all_input.append({"role": "user", "content": user_content})
        else:
            all_input.append(request_messages[-1])
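The same preparation logic can be exercised outside the request handler. The following sketch wraps it in a standalone function with a sample history, so the resulting structure is easy to inspect. The build_input name and the history values are hypothetical.

```python
from typing import Any, Dict, List, Optional

Message = Dict[str, Any]


def build_input(request_messages: List[Message], image: Optional[str]) -> List[Message]:
    """Copy the history and attach the image, if any, to the latest user message."""
    all_input = list(request_messages[:-1])
    latest = request_messages[-1]
    if image:
        # List concatenation builds a new content list, so the caller's message is unchanged.
        content = latest["content"] + [{"type": "input_image", "image_url": image}]
        all_input.append({"role": "user", "content": content})
    else:
        all_input.append(latest)
    return all_input


history = [
    {"role": "user", "content": [{"type": "input_text", "text": "Hi"}]},
    {"role": "assistant", "content": [{"type": "output_text", "text": "Hello!"}]},
    {"role": "user", "content": [{"type": "input_text", "text": "What is the image about?"}]},
]
result = build_input(history, "data:image/png;base64,iVBORw0KGgo=")
print([part["type"] for part in result[-1]["content"]])
```

Only the latest user message receives the image part. Earlier messages in the history are passed through unchanged.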

Next, bp.openai_client.responses.create calls the Azure OpenAI Responses API and streams the response. The store=False parameter instructs the API to not store responses on the server, making the call stateless.

        openai_stream = await bp.openai_client.responses.create(
            model=bp.model_name,
            input=all_input,
            stream=True,
            temperature=0.3,
            store=False,
        )

Finally, the response is streamed back to the client. The Responses API emits many event types, but only the response.output_text.delta event is needed for streaming generated text. Error events are logged and forwarded to the frontend.

        try:
            async for event in openai_stream:
                if event.type == "response.output_text.delta":
                    yield json.dumps({"type": event.type, "delta": event.delta}, ensure_ascii=False) + "\n"
                elif event.type in ("response.failed", "error"):
                    current_app.logger.error("Responses API error: %s", event)
                    yield json.dumps({"type": event.type}, ensure_ascii=False) + "\n"
        except Exception as e:
            current_app.logger.exception("Error in response stream")
            yield json.dumps({"error": str(e)}, ensure_ascii=False) + "\n"

    return Response(response_stream())
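To see the filtering and JSON Lines framing in isolation, the following sketch replaces the Azure OpenAI stream with a small fake. The FakeEvent class, fake_openai_stream, and to_json_lines are hypothetical stand-ins, not part of the sample or the openai package. The fake emits two text deltas surrounded by other event types to show that only the deltas are forwarded.

```python
import asyncio
import json
from dataclasses import dataclass
from typing import AsyncIterator, List


@dataclass
class FakeEvent:
    """Hypothetical stand-in for a Responses API stream event."""
    type: str
    delta: str = ""


async def fake_openai_stream() -> AsyncIterator[FakeEvent]:
    for event in (
        FakeEvent("response.created"),
        FakeEvent("response.output_text.delta", "¡Hola"),
        FakeEvent("response.output_text.delta", "!"),
        FakeEvent("response.completed"),
    ):
        yield event


async def to_json_lines(stream: AsyncIterator[FakeEvent]) -> AsyncIterator[str]:
    """Forward only text deltas and failures, one JSON object per line."""
    async for event in stream:
        if event.type == "response.output_text.delta":
            yield json.dumps({"type": event.type, "delta": event.delta}, ensure_ascii=False) + "\n"
        elif event.type in ("response.failed", "error"):
            yield json.dumps({"type": event.type}, ensure_ascii=False) + "\n"


async def main() -> List[str]:
    return [line async for line in to_json_lines(fake_openai_stream())]


lines = asyncio.run(main())
print("".join(lines), end="")
```

Each yielded string ends with a newline, which is what lets the client split the stream into complete JSON objects. With ensure_ascii=False, non-ASCII characters such as ¡ are sent as-is instead of as \u escapes.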

Frontend libraries and features

The frontend uses modern browser APIs and libraries to create an interactive chat experience. Developers can customize the interface or add features by understanding these components:

Speech Input/Output: Custom web components use the browser's Speech APIs:

- <speech-input-button>: Converts speech to text using the Web Speech API's SpeechRecognition. It provides a microphone button that listens for voice input and emits a speech-input-result event with the transcribed text.

- <speech-output-button>: Reads text aloud using the SpeechSynthesis API. It appears after each assistant response with a speaker icon, letting users hear the response.

Why use browser APIs instead of Azure Speech Services?

- No cost - runs entirely in the browser

- Instant response - no network latency

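- Privacy - voice data doesn't pass through your app or Azure, although some browsers, such as Chrome, send audio to a server-based engine for speech recognition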

- No need for extra Azure resources

These components are in src/quartapp/static/speech-input.js and speech-output.js.

Image Preview: Displays the selected image before the message is submitted so that the user can confirm it. The preview updates automatically when a file is selected.

fileInput.addEventListener("change", async function() {
    const file = fileInput.files[0];
    if (file) {
        const fileData = await toBase64(file);
        imagePreview.src = fileData;
        imagePreview.style.display = "block";
    }
});

Bootstrap 5 and Bootstrap Icons: Provides responsive UI components and icons. The app uses the Cosmo theme from Bootswatch for a modern look.

Template-based Message Rendering: Uses HTML <template> elements for reusable message layouts, ensuring consistent styling and structure.

Other sample resources to explore

In addition to the chat app sample, there are other resources in the repo to explore for further learning. Check out the following notebooks in the notebooks directory:

| Notebook | Description |
|---|---|
| chat_pdf_images.ipynb | This notebook demonstrates how to convert PDF pages to images and send them to a vision model for inference. |
| chat_vision.ipynb | This notebook is provided for manual experimentation with the vision model used in the app. |

Localized Content: Spanish versions of the notebooks are in the notebooks/Spanish/ directory, offering the same hands-on learning for Spanish-speaking developers. Both English and Spanish notebooks show:

- How to call vision models directly for experimentation

- How to convert PDF pages to images for analysis

- How to adjust parameters and test prompts

Clean up resources

Clean up Azure resources

The Azure resources created in this article are billed to your Azure subscription. If you don't expect to need these resources in the future, delete them to avoid incurring more charges.

To delete the Azure resources, run the following Azure Developer CLI command. The --purge flag also permanently deletes resources that would otherwise be soft-deleted:

azd down --purge

Clean up GitHub Codespaces

Deleting the GitHub Codespaces environment helps you get the most out of the free per-core hours included with your account.

Important

For more information about your GitHub account's entitlements, see GitHub Codespaces monthly included storage and core hours.

Sign in to the GitHub Codespaces dashboard.

Locate your currently running codespaces sourced from the Azure-Samples/openai-chat-vision-quickstart GitHub repository.

Open the context menu for the codespace and select Delete.

Get help

Log your issue to the repository's Issues.